Investigation into Organized Criminal Networks: AI-Produced Fraud Materials

In the "pig butchering scam" industry, fraudsters rely on a large volume of audio, video, and text materials to support their fraudulent activities. These materials include, but are not limited to, fake personal profiles, scam scripts, chat dialogues, landscape photos, and personal portraits. Fraud practitioners commonly refer to these as "materials".

Over the past few years, the rapid expansion of online fraud has created massive demand for such materials, leading to the emergence of specialized personnel dedicated to collecting or producing fraudulent content. With the rapid development of large language models, this task has increasingly been taken over by AI tools.

This report aims to clarify this threat by disclosing the business model and blockchain address activities of specific "software development" service providers.

Material Provider

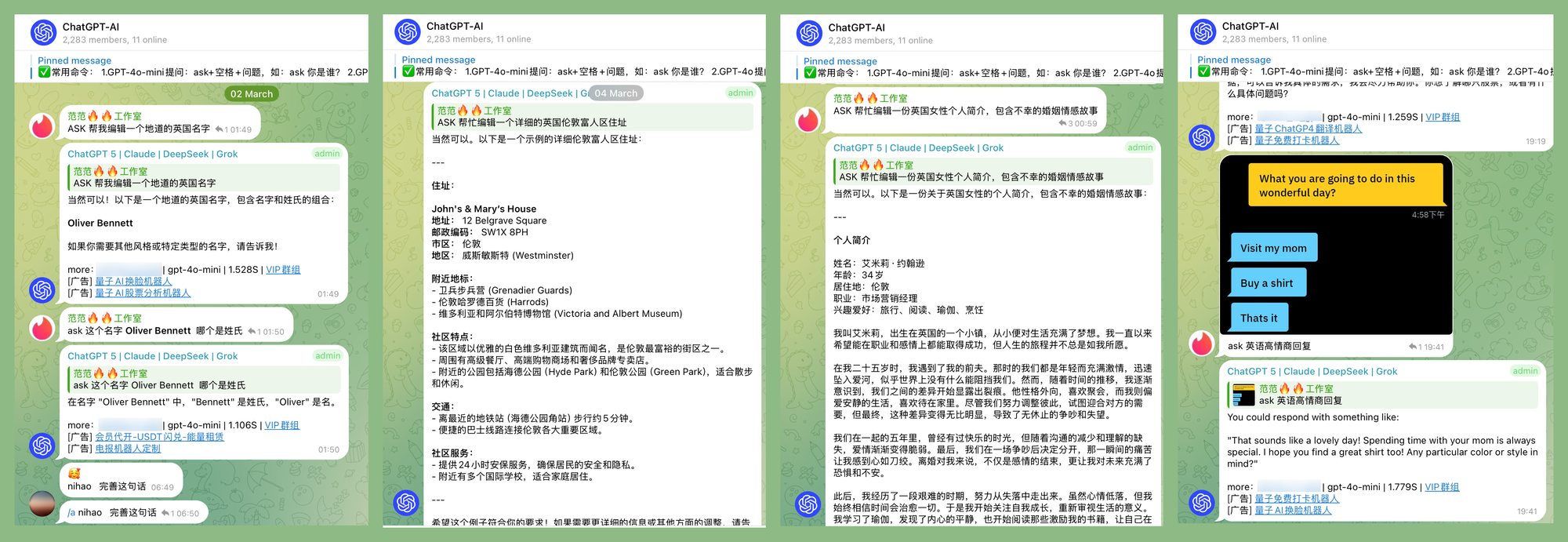

Take persona creation as an example: fraudsters rely on large volumes of photos and captions to craft a sophisticated lifestyle or wealthy identity, with the intent to deceive and manipulate their targets.

The images above are from three Telegram scam material channels. Note that these are not ordinary landscape photos: they are fraud materials produced specifically for online scam operators. Such photos are usually paired with matching captions, so fraudsters can simply copy them and post them on social media platforms to deceive targets.

In addition to social media materials, these groups also provide daily chat scripts and investment scam playbooks used to lure victims. As shown in the images above, one such group offers a large number of scripted tutorials, including but not limited to: how to develop romantic relationships with victims from specific countries, major investment methods in those countries, and how to naturally guide victims into the scam without raising suspicion.

Such communities are widespread on Telegram and maintain high-intensity daily updates.

Dark AI as a Service

Even with such an extensive variety of materials, this method of supplying content still fails to address key problems fraudsters face during online chats: stiff grammar, inability to adapt spontaneously, and excessively high labor costs.

Large language models have effectively overcome these shortcomings. Today, even traditional app developers have shifted their business to providing dark AI services for the fraud industry.

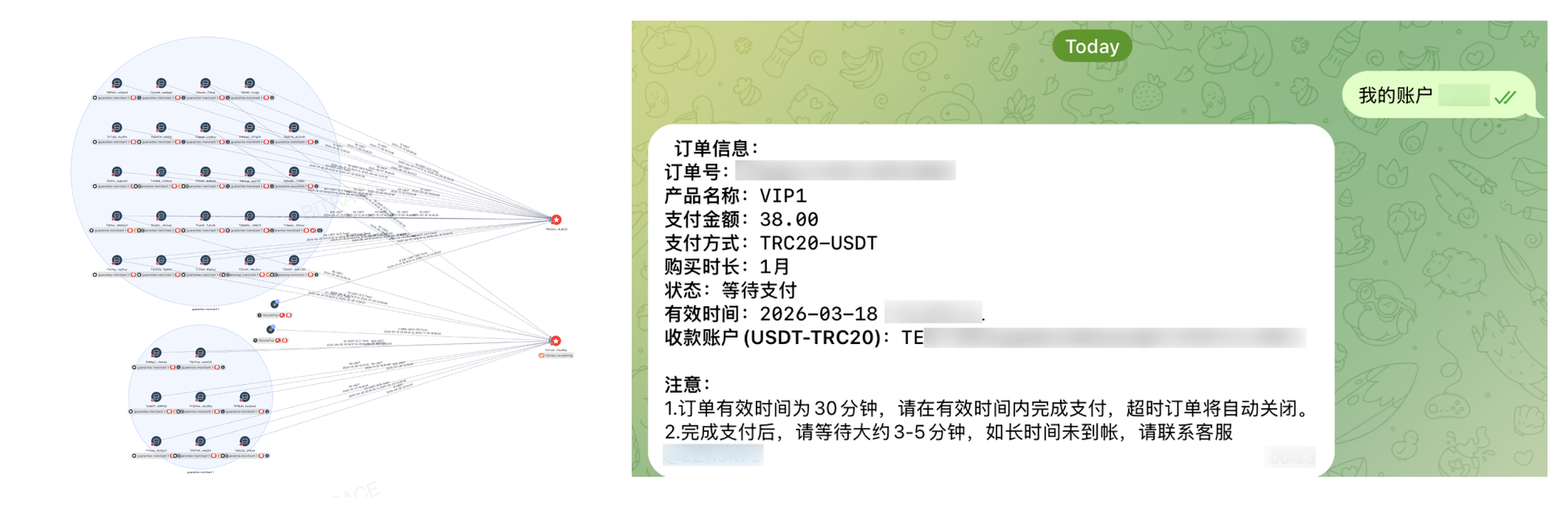

A typical business model works in two stages. First, the provider sets up public channels that offer free aggregated AI services to attract operators from the cybercrime underground. It then converts them into paying customers for computing power packages, underground industry bots, and malicious software development services.

Take the public community of a service provider called Quantum Technology as an example. A large number of cybercrime underground practitioners use the community bot supplied by the service provider directly to generate fraudulent materials. As shown in the image above, fraudsters have used this service to create the fake identity of a wealthy divorced woman living in London, UK. Guided by the AI, they use this identity to conduct cross-language conversations with potential victims.

According to the public notice in the service provider’s community, higher conversation quotas can be obtained via paid subscriptions. The fees range from 9 USDT to 159 USDT per month, depending on the VIP level.

Meanwhile, the service provider also offers Telegram community bot services and custom development. According to its official website, such bots are mostly used for illegal activities including escrow services for unregulated cryptocurrency transactions, illegal online gambling, and fraud.

Crypto Fund Threats

All services and products offered by Quantum Technology are paid for exclusively with the cryptocurrency USDT. By testing payments through its order bot, multiple primary receiving wallet addresses can be obtained.

Data analysis shows that 18% of the USDT received by the service provider’s addresses for its paid aggregated AI services originates from Huionepay, a sanctioned crypto payment processor, and from other merchant groups facilitating unregulated cryptocurrency transactions. This funding composition indicates that its key clientele consists of cybercrime underground operators.
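The share calculation described above can be sketched as follows. This is a minimal illustration, not the method actually used for this report: the label names in `HIGH_RISK_LABELS`, the `(sender, amount)` transfer format, and the address-to-label mapping are all hypothetical, and in practice the labels would come from an attribution database.

```python
from collections import defaultdict
from decimal import Decimal

# Hypothetical label names; a real attribution dataset would define its own.
HIGH_RISK_LABELS = {"sanctioned_payment_processor", "unregulated_otc_merchant"}


def high_risk_inflow_share(transfers, labels):
    """Return (share, totals_by_label) for inbound USDT transfers.

    transfers: iterable of (sender_address, amount_usdt) pairs
    labels:    dict mapping sender_address -> attribution label
    """
    total = Decimal("0")
    by_label = defaultdict(Decimal)
    for sender, amount in transfers:
        value = Decimal(str(amount))  # avoid float rounding on token amounts
        total += value
        by_label[labels.get(sender, "unlabeled")] += value

    if total == 0:
        return Decimal("0"), dict(by_label)

    risky = sum(
        (v for k, v in by_label.items() if k in HIGH_RISK_LABELS),
        Decimal("0"),
    )
    return risky / total, dict(by_label)


if __name__ == "__main__":
    # Toy data for illustration only; not taken from the investigation.
    sample_transfers = [("addr_a", "180"), ("addr_b", "820")]
    sample_labels = {"addr_a": "sanctioned_payment_processor"}
    share, breakdown = high_risk_inflow_share(sample_transfers, sample_labels)
    print(f"high-risk share: {share:.2%}")
```

Unlabeled senders are counted in the denominator, so a figure produced this way is a lower bound on the high-risk share: unattributed addresses may still belong to underground operators.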

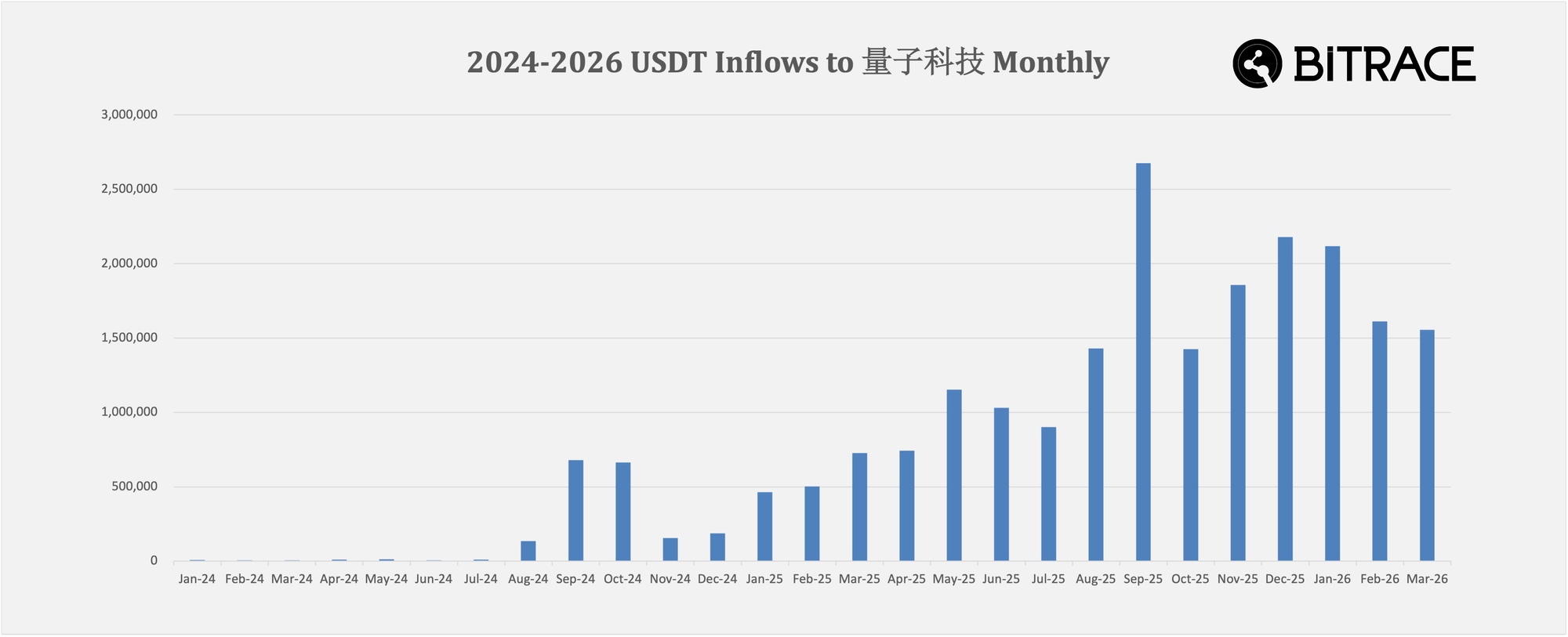

When addresses associated with software development, gambling bot customization, and illegal cryptocurrency exchange services are included in the analysis, the service provider’s business volume shows month-over-month growth. From January 2024 to March 2026, it collected at least 22,000,000 USDT in total.
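A monthly volume view like the one described here can be produced by bucketing inbound transfers by calendar month. The sketch below assumes a hypothetical input of `(unix_timestamp_seconds, amount_usdt)` pairs already filtered to the provider's receiving addresses; it does not reproduce the report's dataset or tooling.

```python
from collections import defaultdict
from datetime import datetime, timezone
from decimal import Decimal


def monthly_inflows(transfers):
    """Sum inbound USDT per UTC calendar month.

    transfers: iterable of (unix_timestamp_seconds, amount_usdt) pairs
    Returns a dict keyed by "YYYY-MM", in chronological order.
    """
    totals = defaultdict(Decimal)
    for ts, amount in transfers:
        month = datetime.fromtimestamp(ts, tz=timezone.utc).strftime("%Y-%m")
        totals[month] += Decimal(str(amount))
    return dict(sorted(totals.items()))


def is_strictly_increasing(month_totals):
    """True if every month's total exceeds the previous month's total."""
    values = list(month_totals.values())
    return all(a < b for a, b in zip(values, values[1:]))


if __name__ == "__main__":
    # Toy data for illustration only; not taken from the investigation.
    sample = [
        (1704067200, "100"),  # 2024-01-01 UTC
        (1704153600, "50"),   # 2024-01-02 UTC
        (1706745600, "200"),  # 2024-02-01 UTC
    ]
    totals = monthly_inflows(sample)
    print(totals, is_strictly_increasing(totals))
```

Months with no transfers are simply absent from the result, so a gap in activity would need to be filled with zeros before testing for a continuous upward trend.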

About Bitrace

Bitrace is a leading Web3 regulatory technology company headquartered in Hong Kong, serving the Asia-Pacific region.

By leveraging AI and big data technologies, Bitrace enables more accurate and efficient detection and monitoring of on-chain risks and criminal activities. It provides financial institutions, exchanges, payment companies, and regulatory and law enforcement agencies with professional solutions for on-chain fund tracing and anti-money laundering (AML) compliance.

Bitrace focuses on cryptocurrency crime investigations and maintains close cooperation with regulatory and law enforcement authorities, including the Hong Kong Police Force and Hong Kong Customs. It also collaborates with law enforcement agencies and Web3 enterprises worldwide.

To date, Bitrace has supported thousands of cases, monitored hundreds of billions in high-risk funds, and successfully helped recover billions of US dollars in losses.

Contact us:

Website: www.bitrace.io

Email: bd@bitrace.io

Twitter: @Bitrace_team

LinkedIn: @bitrace tech